This is just one possible scenario that you could use to create a Layer 2 stretch network in your hybrid cloud environment. The goal is to understand this scenario and to have a place to ask questions. The plan is to introduce additional scenarios to drive understanding. This scenario uses AOS 6.6 and will most likely change with newer AOS releases.

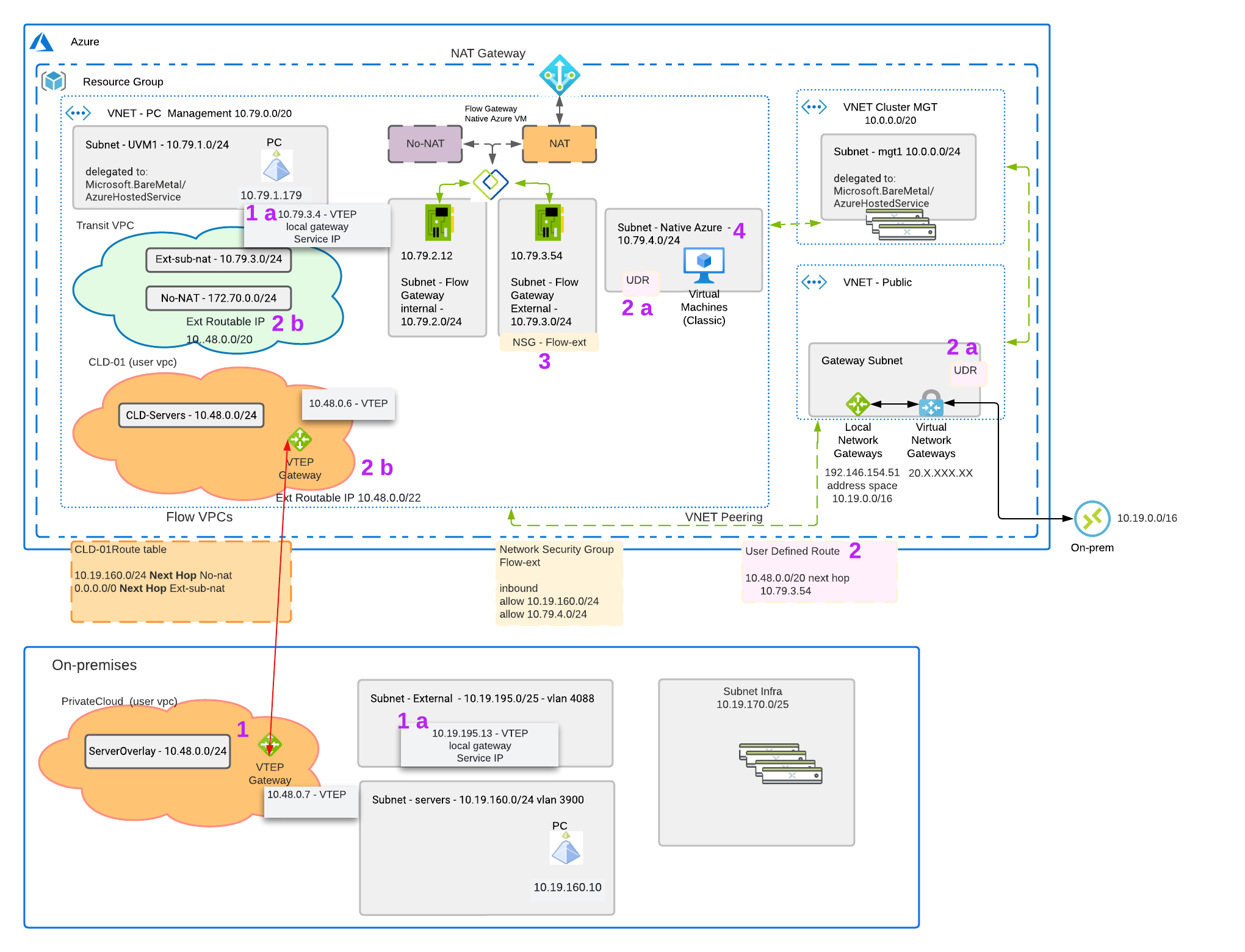

We have an Azure environment at the top of the diagram and a private datacenter below it. We want to understand what happens when the VMs running on Nutanix in Azure fail back or migrate to the private datacenter while using a Layer 2 stretch. After the failover, how will the native Azure VMs in Step 4 reach the VMs that failed over or migrated? The VMs running on the NC2 cluster in Azure use a routed path for access. This means we can route on-prem and native Azure services to the VMs running on the NC2 cluster.

Step 1 – L2

We have set up a Layer 2 stretch between Azure and the private datacenter. We are using a VTEP gateway, which is a good fit when you already have a VPN set up, because the VTEP gateway doesn’t add additional encryption overhead to the solution. The VTEP gateway in Azure uses an IP from the User VPC (Step 1A) that it is stretching and an IP from the Flow External Gateway subnet. The Flow Gateway provides north-south traffic for the VMs running on the cluster in Azure. This external subnet provides native Azure IPs. These IPs allow NATed traffic and other services to easily reach the rest of the Azure network. AHV/CVMs and Prism Central are not in the network path of the Flow Gateway.

In this example, each side of the stretched network has its local gateway in the user VPC set to the local gateway address. This means that traffic is not tromboning through one side. There are use cases where you might want to trombone the traffic.

Traffic exiting the Flow User VPC in the private datacenter also uses an external network (Step 1 A). This external network is a dedicated VLAN-backed network, and it is where the private datacenter VTEP gets its address for routing.

Step 2 – Routing Traffic in a routed (No-NAT) setup to VMs running on NC2 on Azure.

In Step 2 we need to set a User Defined Route (UDR) in Azure. The address prefix of this UDR is the CIDR of the VMs running on our Nutanix cluster in Azure. In our example, traffic going to 10.48.0.0/20 is sent to the next hop 10.79.3.54, which is the external NIC of the Flow Gateway VM. In Azure, the UDR is just a new route table listing the routes you want.
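To make the effect of this route concrete, here is a minimal Python sketch that checks whether a destination address falls inside the UDR prefix and, if so, returns the Flow Gateway next hop. The prefix and next hop are the values from the example above; the function name `next_hop_for` and the sample destination addresses are made up for illustration, and this only models the match, it does not talk to Azure.

```python
import ipaddress

# Values taken from the example in this post.
UDR_PREFIX = ipaddress.ip_network("10.48.0.0/20")
FLOW_GATEWAY_NIC = ipaddress.ip_address("10.79.3.54")  # external NIC of the Flow Gateway VM


def next_hop_for(destination: str):
    """Return the UDR next hop if the destination is inside the UDR prefix, else None."""
    if ipaddress.ip_address(destination) in UDR_PREFIX:
        return FLOW_GATEWAY_NIC
    return None


if __name__ == "__main__":
    # Show the address range the /20 actually covers.
    print("UDR covers", UDR_PREFIX[0], "to", UDR_PREFIX[-1])
    # Hypothetical destinations: one inside the prefix, one outside it.
    for dest in ("10.48.5.10", "10.48.16.1"):
        print(dest, "->", next_hop_for(dest))
```

Note that a /20 starting at 10.48.0.0 ends at 10.48.15.255, so any user VM subnet outside that range would need its own route.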

Step 2a

Once you have your new route table, you have to assign it to the subnets where you want to redirect traffic. In our example there are two places where we change the default routes. In the VNET – Public, we set the gateway subnet route table to our newly created UDR so we can route on-prem traffic to the NC2 cluster. We also change the native Azure subnet route table in the PC Management VNet so we can route traffic from native Azure VMs to the NC2 cluster.

Step 2b

To redirect the incoming traffic from the Flow Gateway to the NC2 VMs, you need to set an externally routable prefix list (ERP). You configure the ERP in the transit VPC to cover the entire range of user VM IPs, across all user VPCs, that need routed (No-NAT) access. This is done in Prism Central. You also set an ERP in the user VPC, which is a subset of the range set in the transit VPC.
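The subset relationship between the two ERPs is easy to get wrong, so it can help to sanity check the prefixes before entering them in Prism Central. The sketch below is a standalone Python check, not a Prism Central API call. The function name `uncovered_prefixes` is hypothetical, and the sample prefixes are assumptions borrowed from the CIDRs mentioned in this post rather than a confirmed ERP configuration.

```python
import ipaddress


def uncovered_prefixes(user_vpc_erp, transit_vpc_erp):
    """Return the user VPC prefixes that are not contained in any transit VPC prefix."""
    transit = [ipaddress.ip_network(p) for p in transit_vpc_erp]
    missing = []
    for prefix in user_vpc_erp:
        net = ipaddress.ip_network(prefix)
        # subnet_of() raises TypeError across IP versions, so compare versions first.
        if not any(net.version == t.version and net.subnet_of(t) for t in transit):
            missing.append(prefix)
    return missing


if __name__ == "__main__":
    # Assumed values for illustration only.
    transit_erp = ["10.48.0.0/20"]
    user_erp = ["10.48.0.0/24"]
    print(uncovered_prefixes(user_erp, transit_erp))
```

An empty result means every user VPC prefix sits inside the transit VPC range; anything returned would need to be added to the transit VPC ERP or corrected.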

Step 3

We need to edit the Network Security Group (NSG) on the Flow Gateway to allow traffic from the native Azure subnets and from on-prem.

Step 4

The VMs in the native Azure subnet route through the Flow Gateway VM to reach VMs on 10.48.0.0/24, both in Azure and in the private datacenter. Since UDRs take precedence over routes that come from peered VNets, this will always be the case.

In a planned migration of VMs between Azure and the private datacenter, the traffic path remains the same. After a planned migration, traffic from the VMs in the native Azure subnet still flows through the Flow Gateway and the VTEP.

In an unplanned migration, if the cluster fails, you would have to remove the UDR to allow traffic to flow over the virtual network gateway. Once the UDR is removed, connectivity from the VMs in the native Azure subnet will start to work again. It’s probably more likely that the whole AZ is impacted and this won’t matter, but that’s how that scenario would work.
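The Step 4 behavior can be modeled in a few lines of Python. The sketch below is a simplified model of Azure route selection: longest prefix match first, then user-defined routes ahead of BGP routes ahead of system routes when prefixes tie. The UDR values come from this post. The second route, the same /20 learned through the virtual network gateway, is an assumption made for illustration, as are the `Route` and `select_route` names.

```python
import ipaddress
from dataclasses import dataclass

# Tie-break order when two routes have the same prefix: lower number wins.
SOURCE_PRIORITY = {"user": 0, "bgp": 1, "system": 2}


@dataclass(frozen=True)
class Route:
    prefix: str
    source: str    # "user", "bgp", or "system"
    next_hop: str


def select_route(routes, destination):
    """Pick the longest matching prefix, breaking ties by route source."""
    dest = ipaddress.ip_address(destination)
    candidates = [r for r in routes if dest in ipaddress.ip_network(r.prefix)]
    if not candidates:
        return None
    return min(
        candidates,
        key=lambda r: (-ipaddress.ip_network(r.prefix).prefixlen, SOURCE_PRIORITY[r.source]),
    )


if __name__ == "__main__":
    udr = Route("10.48.0.0/20", "user", "10.79.3.54")  # Flow Gateway external NIC
    # Assumed: the same prefix is also learned via the virtual network gateway.
    via_gateway = Route("10.48.0.0/20", "bgp", "VirtualNetworkGateway")

    # Normal operation and planned migration: the UDR wins.
    print(select_route([udr, via_gateway], "10.48.0.25").next_hop)
    # Unplanned failover after the UDR is removed: traffic uses the gateway.
    print(select_route([via_gateway], "10.48.0.25").next_hop)
```

One design point worth keeping in mind: because longest prefix match is evaluated before route source, a more specific non-UDR route for part of the range would be preferred over the /20 UDR in this model.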

Do you have a scenario you want to walk through? Please ask or clarify below.