Hi Folks,

I have an NX-8170-G10 configured with one 2-port 10G copper NIC and two 2-port 25G fibre NICs. I need to set up the following configuration.

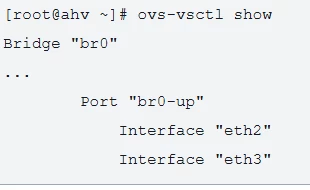

1x 2-port copper: Active-Backup, e.g. br0-up

1x 2-port fibre: Active-Active (balance-tcp) in LACP group 1, e.g. br0-up1

1x 2-port fibre: Active-Active (balance-tcp) in LACP group 2, e.g. br0-up2

I have the following questions.

- Do I need a separate bridge for each uplink?

- I used the following commands on AHV to create the uplink bonds, but I am getting warnings in Prism Element:

  ovs-vsctl add-bond br0 br0-up2 eth4 eth5

  ovs-vsctl set port br0-up2 other_config:lacp-fallback-ab=true

  ovs-vsctl set port br0-up2 other_config:lacp-time=fast

  ovs-vsctl set port br0-up2 bond_mode=balance-tcp

  ovs-vsctl set port br0-up2 lacp=active

  I ran the same commands for br0-up1.
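To make the sequence easier to compare across the two fibre bonds, here is a rough dry-run sketch that only prints the commands listed above and does not execute anything. The function name `print_bond_cmds` is made up for illustration, and `eth2`/`eth3` are placeholder interface names for br0-up1, not taken from the actual host.

```shell
#!/bin/sh
# Dry run: print (do not execute) the OVS commands for one LACP bond.
# Usage: print_bond_cmds BRIDGE BOND IFACE...
print_bond_cmds() {
    bridge=$1
    bond=$2
    shift 2
    # Remaining arguments are the member interfaces of the bond.
    echo "ovs-vsctl add-bond $bridge $bond $*"
    echo "ovs-vsctl set port $bond other_config:lacp-fallback-ab=true"
    echo "ovs-vsctl set port $bond other_config:lacp-time=fast"
    echo "ovs-vsctl set port $bond bond_mode=balance-tcp"
    echo "ovs-vsctl set port $bond lacp=active"
}

# Bond from the post.
print_bond_cmds br0 br0-up2 eth4 eth5
# eth2/eth3 are assumed placeholder names for the other fibre NIC.
print_bond_cmds br0 br0-up1 eth2 eth3
```

Printing the commands first makes it possible to review exactly what would be applied to each bond before touching the host.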