One of my CVMs is throwing this alert:

CVM threshold of 98 Percent Disk IO exceeded

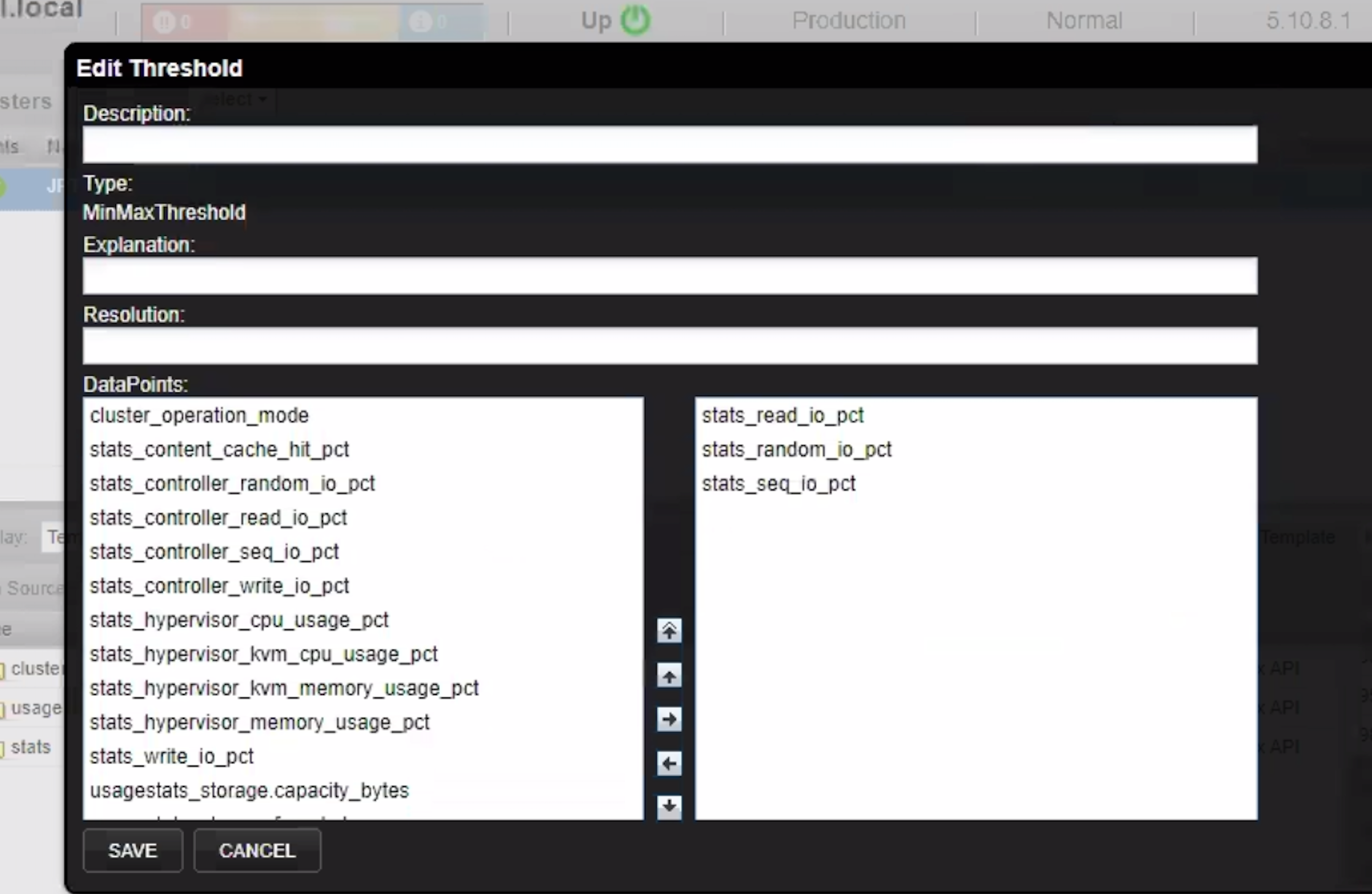

I can’t find anything published about this alert anywhere. I’d appreciate some help understanding what it means and how to mitigate it.

I ran a complete NCC check and it came back clean apart from one warning.

Best answer by theGman