I've tried creating the VM using the files from Fortinet, but the install guide below has no instructions for AHV:

https://s3.amazonaws.com/fortinetweb/docs.fortinet.com/v2/attachments/5795878a-1f78-11e9-b6f6-f8bc1258b856/fac-vm-install-guide-43.pdf

The files I've used are the following:

- KVM - fackvm.qcow2, datadrive.qcow2

- VMware - fac.vmdk, datadrive.vmdk

- Screenshots of my download options from Fortinet are attached

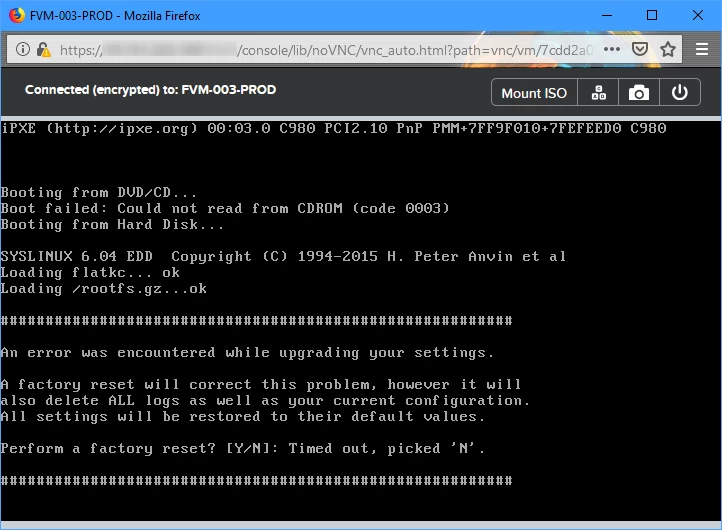

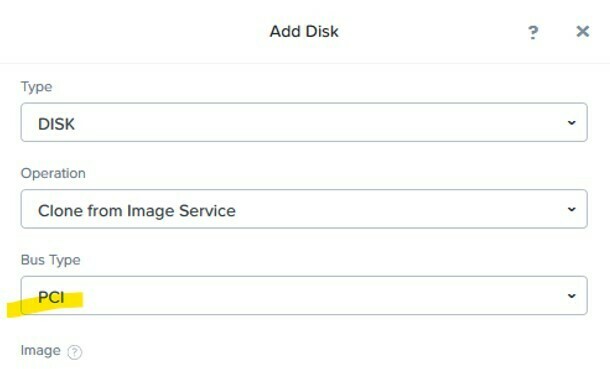

- Created the VM and added cloned disks from the files above

- Set vCPU and memory, and added NICs
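
For reference, the steps above could be scripted roughly as follows. This is only a sketch under assumptions: it presumes the two KVM files (fackvm.qcow2 and datadrive.qcow2) were already uploaded as images, and the VM name, image names, network name, and sizing are hypothetical placeholders. The `acli` subcommands and parameters shown are what I would expect on AHV, but they should be checked against the AOS version in use. The script only prints the commands; it does not run anything.

```python
def build_acli_commands(vm_name, boot_image, data_image, network,
                        vcpus=2, memory="4G"):
    """Return acli command lines for the VM build steps (nothing is executed).

    All argument values are placeholders; the acli syntax is an assumption
    to verify on the cluster before use.
    """
    return [
        # Create the empty VM with the chosen vCPU count and memory
        f"acli vm.create {vm_name} num_vcpus={vcpus} memory={memory}",
        # Add the boot disk as a clone of the uploaded fackvm.qcow2 image
        f"acli vm.disk_create {vm_name} clone_from_image={boot_image}",
        # Add the data disk as a clone of the uploaded datadrive.qcow2 image
        f"acli vm.disk_create {vm_name} clone_from_image={data_image}",
        # Attach a NIC on the named AHV network
        f"acli vm.nic_create {vm_name} network={network}",
    ]


if __name__ == "__main__":
    # Hypothetical names, for illustration only
    for line in build_acli_commands("fac-vm", "fac-boot", "fac-data", "vlan0"):
        print(line)
```

Printing the commands first makes it easy to review them before pasting into a CVM session.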
