Has anyone ever attempted a host boot disk repair and encountered this issue before?

All cables and SFPs are still plugged in, just as they were in production. I have not yet attempted a rebuild via Foundation, since these were the steps provided by Nutanix support: https://portal.nutanix.com/page/documents/details?targetId=Hypervisor-Boot-Drive-Replacement-Platform-v510-Multinode-G3G4G5:Hypervisor-Boot-Drive-Replacement-Platform-v510-Multinode-G3G4G5

Steps Taken:

- Downloaded and burnt phoenix.iso to USB

- Booted USB into Phoenix

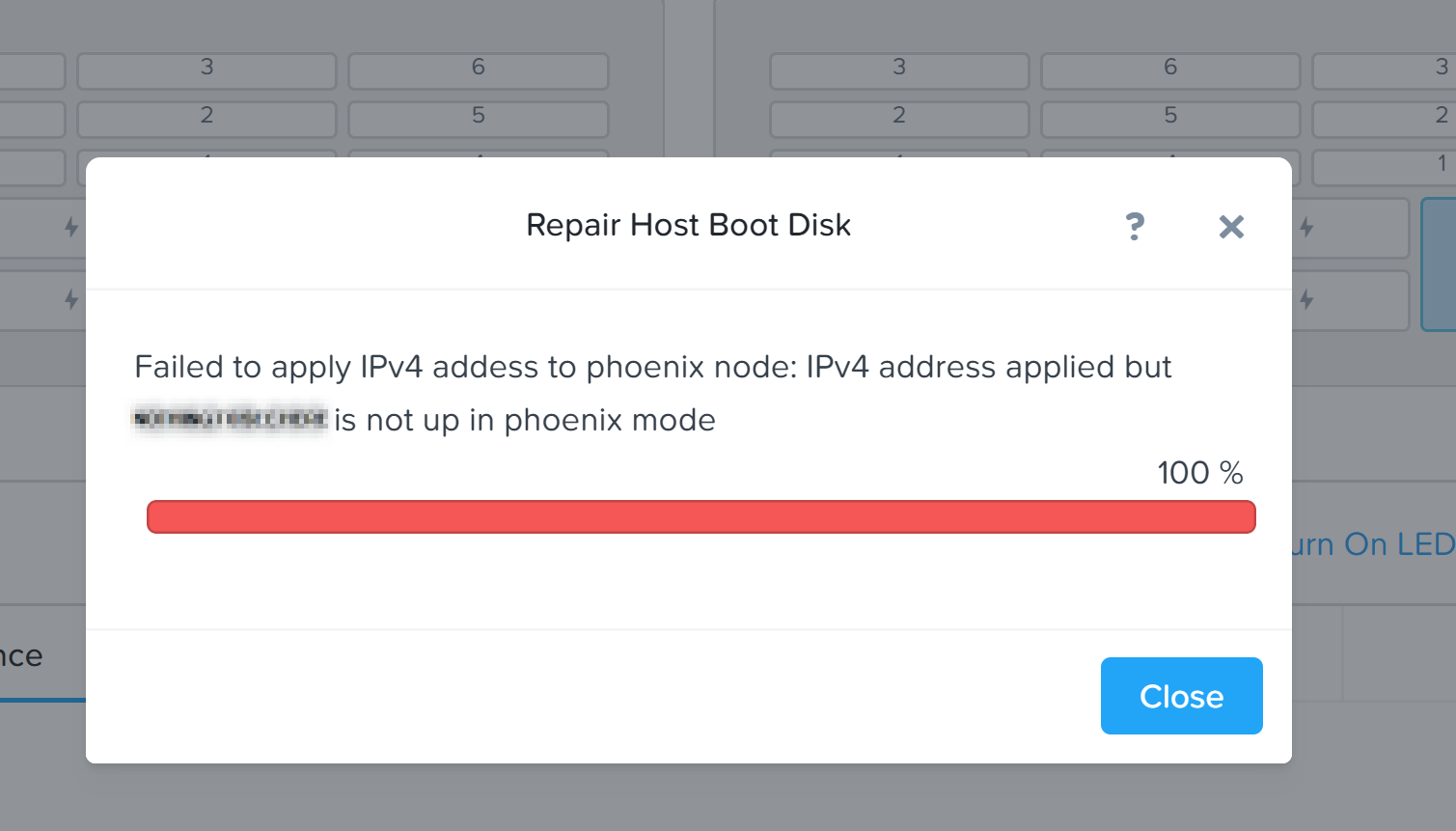

- Kicked off Repair Host Boot Disk and uploaded the whitelisted ESXi image

- Encountered error

I have a case open with support and have escalated it. While I'm waiting, I figured I could ask the community. I will post a response here once we figure out the issue.

Cheers All!

(Edit: I posted this in the CE forum by accident)

Best answer by j_seet
