When do you use Compression?

Is anyone using compression? If so, for which workloads and what is the experience like on your cluster?

This topic has been closed for comments

I have a customer who's using it with great success for databases.

Dedup wasn't getting the job done (the same was true when he was on NetApp).

Compression helped things out, with no noticeable performance impact.

Sylvain.

That's helpful, thanks. What kinds of databases? What size were they? What kind of space savings are you seeing?

As far as I know, deduplication is only on the hot tier in NOS 3.5.x. So we won't see any savings by employing deduplication until Nutanix releases NOS 4.0.

The databases were Oracle 11g, as I recall; sizes were in the 200-400 GB range.

I don't know about the space savings, I'll have to check with them, but they were happy enough.

I'll get back to you if/when I get the chance to see them.

Sylvain.

I wouldn't use inline compression for databases. At most I'd use post-process compression with a long delay time. That works well for databases where you store data for a long time and never write to it again.

Hi,

I have been running Nutanix for a couple of months now and have successfully used inline compression for our SQL DB servers.

I have moved about fifteen VMs of 0.5 to 1 TB each over from a very competent NetApp environment, and I have seen no performance impact from compression. I use PAGE compression within SQL Server and have still been able to get a 1.8:1 compression ratio.

I have run extensive tests both with and without compression and have seen no negative effect from inline compression so far. General load on the cluster is still low, as I have an 8-node 6060 cluster; my only concern is what happens when CPU load on the cluster increases. That remains to be seen.
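For anyone translating a ratio like the 1.8:1 above into capacity, here is a minimal sketch of the arithmetic. The function names are made up for illustration, and the VM count and sizes are just the rough figures from this post ("about fifteen", 0.5 to 1 TB each), not measured values.

```python
def space_savings(ratio: float) -> float:
    """Fraction of logical capacity saved at a given compression ratio (1.8 means 1.8:1)."""
    if ratio <= 0:
        raise ValueError("ratio must be positive")
    return 1.0 - 1.0 / ratio


def stored_size_tb(logical_tb: float, ratio: float) -> float:
    """Physical size in TB after compression, before any replication overhead."""
    return logical_tb / ratio


# Assumption: fifteen VMs of 0.5 to 1 TB each, i.e. 7.5 to 15 TB of logical data.
low_tb, high_tb = 15 * 0.5, 15 * 1.0
ratio = 1.8

print(f"savings: {space_savings(ratio):.1%}")
print(f"stored: {stored_size_tb(low_tb, ratio):.2f} to {stored_size_tb(high_tb, ratio):.2f} TB")
```

At 1.8:1 this works out to roughly 44% saved, so 7.5 to 15 TB of logical data would occupy about 4.2 to 8.3 TB before replication is taken into account.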

Hi there.

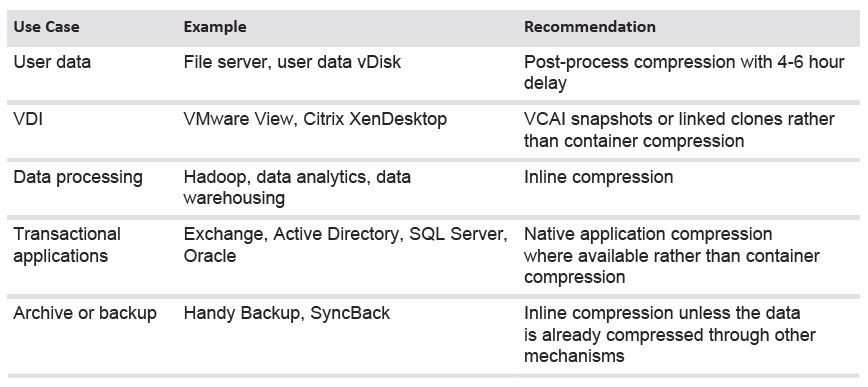

I've been trying to understand compression. Looking at the Nutanix Bible, I found this about inline compression:

No impact to random I/O, helps increase storage tier utilization. Benefits large or sequential I/O performance by reducing data to replicate and read from disk.

However, when looking at the

In that case, should inline compression only be used for dump databases where data is written once and never read? Are there any comparison benchmarks showing the real (not perceived) performance impact? I can't tell a customer "use compression, it doesn't feel worse"... 😉
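One way to get numbers rather than impressions, at least for how compressible a data sample is and what compressing it costs in CPU time, is a quick offline measurement. This is only a rough sketch: `zlib` is a stand-in and not necessarily what the cluster uses, the `measure` helper and both sample payloads are hypothetical, and it says nothing about end-to-end I/O latency on a real cluster.

```python
import os
import time
import zlib


def measure(payload: bytes, level: int = 6) -> tuple[float, float]:
    """Return (compression ratio, seconds spent compressing) for one payload."""
    start = time.perf_counter()
    compressed = zlib.compress(payload, level)
    elapsed = time.perf_counter() - start
    return len(payload) / len(compressed), elapsed


# Hypothetical samples of roughly 1 MiB each: highly repetitive text and random bytes.
repetitive = b"INSERT INTO t VALUES (1, 'abc');\n" * 32768
random_block = os.urandom(1024 * 1024)

for name, payload in (("repetitive", repetitive), ("random", random_block)):
    ratio, seconds = measure(payload)
    print(f"{name}: ratio {ratio:.2f}:1, {seconds * 1000:.1f} ms")
```

Running the same measurement on a sample of real database files would show whether the data compresses at all; random or already-compressed data stays close to 1:1 while still costing CPU time.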

Hello,

Can anyone please post recent experiences and/or recommendations regarding compression and deduplication? We're setting up our first VMware vSphere environment on Nutanix (AOS 5.5), which will run mainly database workloads (Oracle and MSSQL) and a few application servers. I've read quite a few posts and Nutanix documentation on this topic, but there seem to be a lot of contradictions and disagreements, so I'm still unsure which direction to go. Thank you.
