This post was authored by Michael Haigh, Technical Marketing Engineer, Nutanix

Whether you’re using traditional VM-based infrastructure or newer container-based infrastructure, having a way to implement Role Based Access Control (RBAC) is absolutely critical. Implementing RBAC for your Nutanix Karbon Kubernetes clusters is straightforward once you understand Kubernetes RBAC authorization.

The Kubernetes RBAC documentation has great information and resources, but to summarize, there are four main objects that are relevant:

- A Role grants access to Kubernetes resources in a single namespace. For instance, you might create a Role named pod-reader which only has read access to pods in the default namespace.

- A ClusterRole grants access to Kubernetes resources cluster wide, irrespective of namespace.

- A RoleBinding ties the permissions granted via a Role to a specific user or set of users. So if you had a user named Paul who you wanted to give rights to read pods in a single namespace, you first create the pod-reader Role, and then bind that Role to Paul via a RoleBinding.

- A ClusterRoleBinding ties the permissions granted via a ClusterRole to a specific user or set of users. Since it’s binding a ClusterRole, this grants permissions cluster-wide, rather than being tied to a single namespace.
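The pod-reader example from the list above could look roughly like the following. This is a minimal sketch, not a manifest used later in this post: the user name paul@nutanixdemo.com, the binding name paul-pod-reader-RB, and the file name pod-reader-rbac.yaml are all assumptions made for illustration.

```shell
# Write a Role and a RoleBinding for the pod-reader example to a manifest file.
cat << 'EOF' > pod-reader-rbac.yaml
apiVersion: rbac.authorization.k8s.io/v1
kind: Role
metadata:
  namespace: default
  name: pod-reader
rules:
- apiGroups: [""]                    # "" is the core API group, where pods live
  resources: ["pods"]
  verbs: ["get", "list", "watch"]    # read-only verbs
---
apiVersion: rbac.authorization.k8s.io/v1
kind: RoleBinding
metadata:
  namespace: default
  name: paul-pod-reader-RB           # hypothetical binding name
subjects:
- kind: User
  name: paul@nutanixdemo.com         # hypothetical user name for Paul
  apiGroup: rbac.authorization.k8s.io
roleRef:
  kind: Role
  name: pod-reader                   # must match the Role defined above
  apiGroup: rbac.authorization.k8s.io
EOF
```

Applying the file with kubectl apply -f pod-reader-rbac.yaml should create both objects. Swapping Role for ClusterRole and RoleBinding for ClusterRoleBinding (and dropping the namespace fields) gives the cluster-wide equivalent.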

In this blog post, we’ll be creating some fictitious users with varying responsibilities and Kubernetes cluster access needs. We’ll then walk through applying those roles to a Karbon Kubernetes cluster and see how the end users can access the kubeconfig via Nutanix Prism.

Defining our Users

In this example, you’re the Infrastructure Admin, where one of your main responsibilities is managing all of your Nutanix infrastructure. You also have 4 users you wish to support:

- Alice is your Kubernetes expert, so you wish to give her full admin access for the entire Kubernetes cluster.

- Dave is a developer within your organization, so you wish to give him the ability to deploy and manage workloads across the entire Kubernetes cluster.

- Olivia and Owen are in operations in your organization, so they need to be able to manage workloads for an individual namespace within the Kubernetes cluster. Additionally, there may be more members of the operations team added later, so you wish to manage them with an Active Directory Group, rather than an individual User.

Creating our Kubernetes (Cluster) Roles and Role Bindings

Now that we have our users defined, it’s time for you, as the Infrastructure Admin, to create the appropriate roles and role bindings in our Kubernetes cluster. We’ll first create the appropriate yaml for Alice, our Kubernetes Expert.

[infra admin]$ cat << EOF > alice-rbac.yaml

apiVersion: rbac.authorization.k8s.io/v1

kind: ClusterRoleBinding

metadata:

  name: alice-admin-CRB

subjects:

- kind: User

  name: alice@nutanixdemo.com # AD User Name

  apiGroup: rbac.authorization.k8s.io

roleRef:

  kind: ClusterRole

  name: cluster-admin # must match the name of a Role or ClusterRole to bind to

  apiGroup: rbac.authorization.k8s.io

EOF

Since we’re granting Alice access to the entire Kubernetes cluster, and not a single namespace, we’ll need to use a ClusterRole and a ClusterRoleBinding. In this example we’re binding the user alice@nutanixdemo.com to the ClusterRole named cluster-admin. However, you’ll likely note that we’re only defining a ClusterRoleBinding, and not the cluster-admin ClusterRole. This is because cluster-admin is a pre-defined ClusterRole within Kubernetes:

[infra admin]$ kubectl get clusterrole cluster-admin

NAME AGE

cluster-admin 102m

As an exercise for the reader, run “kubectl get clusterrole” to view all the roles already defined in the cluster, and “kubectl describe clusterrole/cluster-admin” to view detailed information about the pre-defined admin ClusterRole.

Next up we have Dave our developer:

[infra admin]$ cat << EOF > dave-rbac.yaml

apiVersion: rbac.authorization.k8s.io/v1

kind: ClusterRole

metadata:

  name: development-CR

rules:

- apiGroups: ["", "apps", "batch", "extensions"]

  resources: ["services", "endpoints", "pods", "secrets", "configmaps", "deployments", "jobs"]

  verbs: ["get", "list", "watch", "create", "update", "patch", "delete"]

---

apiVersion: rbac.authorization.k8s.io/v1

kind: ClusterRoleBinding

metadata:

  name: dave-development-CRB

subjects:

- kind: User

  name: dave@nutanixdemo.com # AD User Name

  apiGroup: rbac.authorization.k8s.io

roleRef:

  kind: ClusterRole

  name: development-CR # must match the name of the ClusterRole to bind to

  apiGroup: rbac.authorization.k8s.io

EOF

Breaking this yaml down, we’re first creating a ClusterRole named development-CR. We’re assigning that ClusterRole a variety of rights via the rules section. Next, we’re creating a ClusterRoleBinding which binds our user dave@nutanixdemo.com to our development-CR role.

If another developer (Dale) were to start in your organization, which of the above pieces would you have to create again? If you answered “only the ClusterRoleBinding,” you’re correct. Our ClusterRole object can be used over and over, so all we need to do is create a new ClusterRoleBinding to bind Dale to the already existing ClusterRole.
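A sketch of what that additional binding for Dale might look like is shown here. The AD user name dale@nutanixdemo.com, the binding name dale-development-CRB, and the file name dale-rbac.yaml are assumptions that simply follow the naming pattern used for Dave.

```shell
# Only a new ClusterRoleBinding is needed; the development-CR ClusterRole already exists.
cat << 'EOF' > dale-rbac.yaml
apiVersion: rbac.authorization.k8s.io/v1
kind: ClusterRoleBinding
metadata:
  name: dale-development-CRB     # hypothetical name, mirroring dave-development-CRB
subjects:
- kind: User
  name: dale@nutanixdemo.com     # assumed AD user name for Dale
  apiGroup: rbac.authorization.k8s.io
roleRef:
  kind: ClusterRole
  name: development-CR           # reuses the ClusterRole created for Dave
  apiGroup: rbac.authorization.k8s.io
EOF
```

Because subjects is a list, another option would be to add Dale as a second entry under the subjects of the existing dave-development-CRB binding instead of creating a new object.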

For smaller organizations or teams, adding users individually may be feasible. However, there are plenty of instances where this method does not scale. Let’s go through our next example where we add Owen and Olivia via an AD group:

[infra admin]$ cat << EOF > ops-rbac.yaml

apiVersion: v1

kind: Namespace

metadata:

  name: ops

---

apiVersion: rbac.authorization.k8s.io/v1

kind: Role

metadata:

  namespace: ops

  name: operations-role

rules:

- apiGroups: ["", "apps", "batch", "extensions"]

  resources: ["services", "endpoints", "pods", "pods/log", "configmaps", "deployments", "jobs"]

  verbs: ["get", "list", "watch"]

---

apiVersion: rbac.authorization.k8s.io/v1

kind: RoleBinding

metadata:

  namespace: ops

  name: operations-RB

subjects:

- kind: Group

  name: operations # AD Group Name

  apiGroup: rbac.authorization.k8s.io

roleRef:

  kind: Role

  name: operations-role # must match the name of the Role to bind to

  apiGroup: rbac.authorization.k8s.io

EOF

The first part of the above yaml defines the Namespace that we’ll utilize for the Role and RoleBinding. If your cluster already has the namespace created, then please omit that portion.

The next section defines the Role. Note that under the metadata section we’re defining the namespace (ops) that this Role belongs to. The rules section resembles the previous example, but it grants only the read-only verbs (get, list, watch) and replaces secrets with pods/log in the resource list; feel free to tweak it for your environment.

Finally, we have the RoleBinding, which is binding our operations AD Group to our operations-role. Again, we’re specifying that this RoleBinding belongs in the ops namespace.
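As an aside, a RoleBinding may also reference a ClusterRole, in which case the permissions apply only within the RoleBinding’s namespace. The sketch below binds the operations group to view, one of the pre-defined Kubernetes ClusterRoles, instead of a custom Role. It is an alternative shown for illustration and is not one of the files applied in this walkthrough; the binding name operations-view-RB and the file name ops-view-rbac.yaml are hypothetical.

```shell
# Alternative sketch: reuse the built-in read-only "view" ClusterRole inside the ops namespace.
cat << 'EOF' > ops-view-rbac.yaml
apiVersion: rbac.authorization.k8s.io/v1
kind: RoleBinding
metadata:
  namespace: ops                 # permissions are limited to this namespace
  name: operations-view-RB       # hypothetical binding name
subjects:
- kind: Group
  name: operations               # AD Group Name, as in ops-rbac.yaml
  apiGroup: rbac.authorization.k8s.io
roleRef:
  kind: ClusterRole              # a RoleBinding can point at a ClusterRole
  name: view                     # pre-defined read-only ClusterRole
  apiGroup: rbac.authorization.k8s.io
EOF
```

The trade-off is less control over the exact resource list: the contents of view are defined by Kubernetes rather than by you, so “kubectl describe clusterrole/view” is worth a look before relying on it.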

Now that we’ve defined all three of our RBAC yamls, let’s go ahead and apply them to our cluster:

[infra admin]$ kubectl apply -f alice-rbac.yaml -f dave-rbac.yaml -f ops-rbac.yaml

Configuring Prism Central

Now that we’ve properly configured our Kubernetes cluster, we need to add our users in Prism Central so they have the ability to log in (or use karbonctl) to download kubeconfig files. As an infrastructure admin, you likely do not want to grant your Kubernetes users access to modify your storage or virtual machines. The good news is that you can simply add your users as Prism Viewers, which grants them read-only access. With this access level, they’ll be able to download kubeconfig files, but they’ll be unable to modify anything.

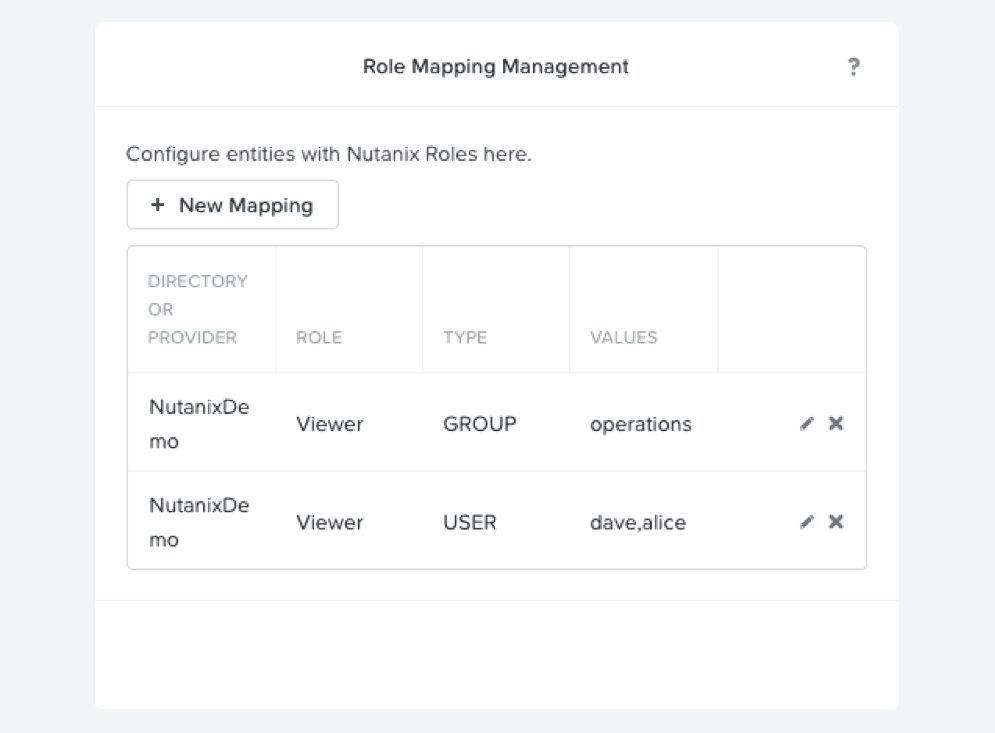

With your infrastructure admin account, log in to Prism Central, select the gear icon in the upper right, and select Role Mapping in the left column. For each User or Group, select the + New Mapping button, and fill out the appropriate fields. Be sure to select the Role as Viewer, and comma separate your Users. Once complete, your role mapping summary should look similar to this:

Once configured, instruct your end users to log in to https://PC-IP:9440.

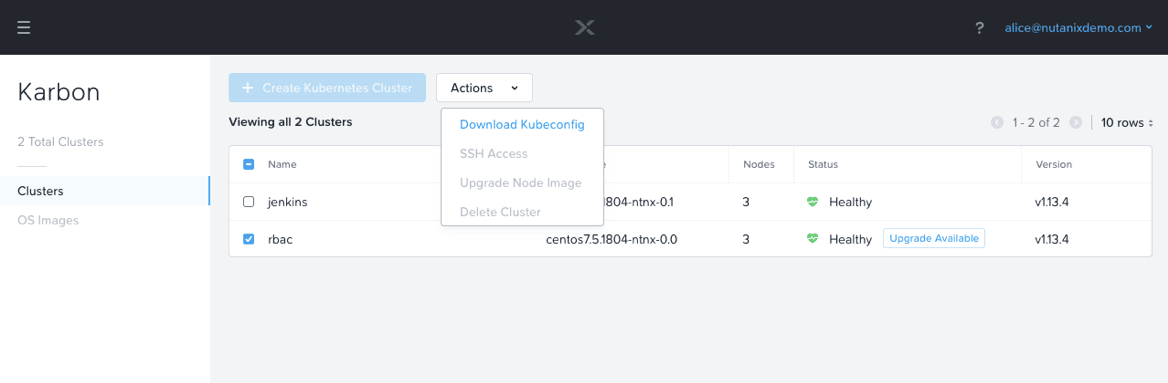

Once your end user is logged in, you’ll note that the only option available to the user is to download kubeconfig files. They’ll be able to download a kubeconfig for any Karbon Kubernetes cluster. However, unless an administrator has created a role and a role binding for that particular user, the kubeconfig will not provide access.

Validating our Configurations

Now that we’ve successfully configured both our Kubernetes cluster and our Prism Role Mappings, let’s run through some example kubectl commands to validate that everything is working as expected. We’ll go through three of our users in the same order as above (Alice, Dave, Olivia).

[alice]$ kubectl get nodes

NAME STATUS ROLES AGE VERSION

karbon-rbac-45ff21-k8s-master-0 Ready master 4h27m v1.13.4

karbon-rbac-45ff21-k8s-master-1 Ready master 4h27m v1.13.4

karbon-rbac-45ff21-k8s-worker-0 Ready node 4h25m v1.13.4

karbon-rbac-45ff21-k8s-worker-1 Ready node 4h25m v1.13.4

karbon-rbac-45ff21-k8s-worker-2 Ready node 4h25m v1.13.4

[alice]$ kubectl get svc

NAME TYPE CLUSTER-IP EXTERNAL-IP PORT(S) AGE

kubernetes ClusterIP 172.19.0.1 <none> 443/TCP 4h27m

[alice]$ kubectl run nginx --image=nginx --port=80

deployment.apps/nginx created

[alice]$ kubectl get pods

NAME READY STATUS RESTARTS AGE

nginx-57867cc648-vckb9 1/1 Running 0 19s

[alice]$ kubectl get pods -n kube-system

NAME READY STATUS RESTARTS AGE

kube-apiserver-rbac-k8s-master-0 3/3 Running 0 4h28m

kube-apiserver-rbac-k8s-master-1 3/3 Running 0 4h28m

kube-dns-5db657c5d6-t7rn8 3/3 Running 0 4h27m

kube-flannel-ds-j4hm9 1/1 Running 0 4h27m

kube-flannel-ds-n6sjr 1/1 Running 0 4h27m

kube-flannel-ds-t8nv8 1/1 Running 0 4h27m

kube-flannel-ds-v7vtm 1/1 Running 0 4h27m

kube-flannel-ds-zhj54 1/1 Running 0 4h27m

kube-proxy-ds-b62js 1/1 Running 0 4h27m

kube-proxy-ds-bxczq 1/1 Running 0 4h27m

kube-proxy-ds-pt7mg 1/1 Running 0 4h27m

kube-proxy-ds-xk8m7 1/1 Running 0 4h27m

kube-proxy-ds-zvfck 1/1 Running 0 4h27m

As expected, Alice has full cluster admin access: she can view (and modify) nodes, create pods, and view pods in all namespaces. Next, let’s look at Dave.

[dave]$ kubectl get nodes

Error from server (Forbidden): nodes is forbidden: User "dave@nutanixdemo.com" cannot list resource "nodes" in API group "" at the cluster scope

[dave]$ kubectl get svc

NAME TYPE CLUSTER-IP EXTERNAL-IP PORT(S) AGE

kubernetes ClusterIP 172.19.0.1 <none> 443/TCP 4h35m

[dave]$ kubectl get pods

NAME READY STATUS RESTARTS AGE

nginx-57867cc648-vckb9 1/1 Running 0 6m16s

[dave]$ kubectl run nginx --image=nginx --port=80 -n ops

deployment.apps/nginx created

[dave]$ kubectl get pods -n ops

NAME READY STATUS RESTARTS AGE

nginx-57867cc648-7rsm5 1/1 Running 0 62s

Note that our first command appropriately fails, as we did not grant Dave access to view or modify nodes. However, the other commands all succeed, regardless of the namespace. Finally, let’s view Olivia’s available actions.

[olivia]$ kubectl get nodes

Error from server (Forbidden): nodes is forbidden: User "olivia@nutanixdemo.com" cannot list resource "nodes" in API group "" at the cluster scope

[olivia]$ kubectl get pods

Error from server (Forbidden): pods is forbidden: User "olivia@nutanixdemo.com" cannot list resource "pods" in API group "" in the namespace "default"

[olivia]$ kubectl get pods -n ops

NAME READY STATUS RESTARTS AGE

nginx-57867cc648-7rsm5 1/1 Running 0 12m

[olivia]$ kubectl describe pod nginx -n ops

Name: nginx-57867cc648-7rsm5

Namespace: ops

...

[olivia]$ kubectl run nginx-olivia --image=nginx --port=80 -n ops

Error from server (Forbidden): deployments.apps is forbidden: User "olivia@nutanixdemo.com" cannot create resource "deployments" in API group "apps" in the namespace "ops"

[olivia]$ kubectl get deployments -n ops

NAME READY UP-TO-DATE AVAILABLE AGE

nginx 1/1 1 1 20m

[olivia]$ kubectl delete deployment nginx -n ops

Error from server (Forbidden): deployments.extensions "nginx" is forbidden: User "olivia@nutanixdemo.com" cannot delete resource "deployments" in API group "extensions" in the namespace "ops"

Again, we first see that Olivia is unable to view node information but, unlike Dave, she also cannot view pods in the default namespace. Recall that Olivia is granted access via a Role and a RoleBinding, which are limited to the ops namespace. In later commands, we see she has privileges to view resources in this namespace, but no ability to create new deployments or delete deployments that were created by Dave.

Back as our infrastructure admin, there are several other commands that are very useful:

[infra admin]$ kubectl auth can-i list pods

yes

[infra admin]$ kubectl auth can-i list pods --as alice@nutanixdemo.com

yes

[infra admin]$ kubectl auth can-i --list --as alice@nutanixdemo.com

[infra admin]$ kubectl auth can-i --list --as dave@nutanixdemo.com

Additionally, there are many great open source tools to aid in determining RBAC and associated resources for your Kubernetes clusters. For next steps, please check out KB-7457 to see how to refresh your Karbon kubeconfig via the command line.
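The kubectl auth can-i checks shown earlier impersonate a single user with --as. Olivia’s access comes from the operations group rather than from her user name, and impersonation generally does not look up AD group membership, so a check for her likely needs the group passed explicitly with --as-group. The helper below is a small sketch of that idea; the function name can_i_as and the KUBECTL override variable are hypothetical conveniences, not part of kubectl.

```shell
# Wrapper around "kubectl auth can-i" that impersonates both a user and a group.
# KUBECTL is a hypothetical override, handy for dry runs (for example KUBECTL=echo).
can_i_as() {
  user="$1"     # user to impersonate, e.g. olivia@nutanixdemo.com
  group="$2"    # group to impersonate, e.g. operations
  shift 2       # the remaining arguments are the verb, resource, and flags
  "${KUBECTL:-kubectl}" auth can-i "$@" --as "$user" --as-group "$group"
}
```

As the infrastructure admin, you might then run “can_i_as olivia@nutanixdemo.com operations list pods -n ops” and “can_i_as olivia@nutanixdemo.com operations create deployments -n ops” to compare against the results Olivia saw above.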

Conclusion

The ability to provide Role Based Access Control for any of your company’s infrastructure is critically important. Thankfully, the combination of built in Kubernetes RBAC and Prism Read Only Users allows for extremely granular control over your Karbon Kubernetes clusters and resources.

©️ 2019 Nutanix, Inc. All rights reserved. Nutanix, the Nutanix logo and the other Nutanix products and features mentioned herein are registered trademarks or trademarks of Nutanix, Inc. in the United States and other countries. All other brand names mentioned herein are for identification purposes only and may be the trademarks of their respective holder(s).